Anti-Bot Systems: How Do They Work and Can They Be Bypassed?

Anti-bot systems are technologies designed to protect websites from automated interactions, such as spam or DDoS attacks. However, not all automated activities are harmful: for instance, bots are sometimes necessary for security testing, building search indexes, and collecting data from open sources. To perform such tasks without being blocked by anti-bot systems, you will need specialized tools.

To be able to bypass an anti-bot system, it's essential to understand what the different types of protection are and how they function.

How Do Anti-Bot Systems Detect Bots?

Anti-bot systems gather a significant amount of information about each website visitor. This information is analyzed, and if any parameters seem uncharacteristic of human users, the suspicious visitor might be blocked or asked to solve a CAPTCHA to prove they are human.

This information is usually collected on three levels: network, browser fingerprint, and behavioral.

- Network Level: Anti-bot systems analyze requests, check the spam score of IP addresses, and inspect packet headers. Visitors whose IP addresses appear on “blacklists,” belong to datacenters, are associated with the Tor network, or look suspicious in other ways might face a CAPTCHA challenge. You have probably experienced this yourself if Google asked you to solve a CAPTCHA just because you were using a free VPN service.

- Browser Fingerprint Level: Anti-bot systems gather information about the browser and the device used to access the website, creating a corresponding device fingerprint. This fingerprint typically includes the type, version, and language settings of the browser, screen resolution, window size, hardware noise, system fonts, media devices, and more.

- Behavioral Level: Some advanced systems examine how closely a user's actions match the behavior of regular website visitors.
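
To make the three levels concrete, here is a deliberately simplified toy model of how signals from each level might be combined into a decision. Real anti-bot systems are proprietary and far more sophisticated; the `Visit` fields, the weights, and the thresholds below are all invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class Visit:
    """Hypothetical signals a site might collect about one visitor."""
    ip_on_blocklist: bool          # network level
    ip_is_datacenter: bool         # network level
    user_agent: str                # browser fingerprint level
    screen_width: int              # browser fingerprint level
    seconds_between_clicks: float  # behavioral level


def suspicion_score(visit: Visit) -> int:
    """Add up made-up penalty points for each suspicious signal."""
    score = 0
    if visit.ip_on_blocklist:
        score += 3
    if visit.ip_is_datacenter:
        score += 2
    # Headless Chrome announces itself in its default User-Agent string.
    if "HeadlessChrome" in visit.user_agent:
        score += 3
    if visit.screen_width == 0:
        score += 1
    # Clicks only milliseconds apart are unlikely to come from a human.
    if visit.seconds_between_clicks < 0.05:
        score += 2
    return score


def decide(visit: Visit, captcha_at: int = 3, block_at: int = 6) -> str:
    """Map the score to one of three outcomes (thresholds are arbitrary)."""
    score = suspicion_score(visit)
    if score >= block_at:
        return "block"
    if score >= captcha_at:
        return "captcha"
    return "allow"
```

The point of the sketch is only that a visitor who looks clean on one level can still be challenged because of signals on another.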

There are many anti-bot systems, and the specifics of each can vary greatly and change over time. Popular solutions include:

- Akamai

- Cloudflare

- DataDome

- Imperva (formerly Incapsula)

- Kasada

- PerimeterX

Understanding which anti-bot system protects a website can be important for choosing the best bypassing strategy. You will find entire sections dedicated to bypassing specific anti-bot systems on specialized forums and Discord channels. For example, such information can be found on The Web Scraping Club.

To identify which anti-bot system a website uses, you can use tools like the Wappalyzer browser extension.
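
If you prefer to check from a script, a rough first guess can be made from the response headers and cookie names a site returns. The sketch below is an assumption-heavy heuristic: the marker names are commonly reported by the scraping community rather than documented guarantees, they may change at any time, and `MARKERS` and `guess_antibot` are names made up for this example.

```python
# Commonly reported header and cookie name prefixes per vendor.
# These are community observations, not official identifiers.
MARKERS = {
    "Cloudflare": {"headers": ("cf-ray",), "cookies": ("__cf_bm", "cf_clearance")},
    "Akamai": {"headers": (), "cookies": ("_abck", "ak_bmsc", "bm_sz")},
    "DataDome": {"headers": ("x-datadome",), "cookies": ("datadome",)},
    "Imperva (Incapsula)": {"headers": ("x-iinfo",), "cookies": ("visid_incap_", "incap_ses_")},
    "PerimeterX": {"headers": (), "cookies": ("_px",)},
    "Kasada": {"headers": ("x-kpsdk-ct",), "cookies": ()},
}


def guess_antibot(headers, cookie_names):
    """Return vendors whose known markers appear in the given response data.

    headers: mapping of response header names to values.
    cookie_names: iterable of cookie names set by the site.
    """
    header_names = [name.lower() for name in headers]
    cookies = [name.lower() for name in cookie_names]
    found = []
    for vendor, marks in MARKERS.items():
        header_hit = any(h.startswith(p) for h in header_names for p in marks["headers"])
        cookie_hit = any(c.startswith(p) for c in cookies for p in marks["cookies"])
        if header_hit or cookie_hit:
            found.append(vendor)
    return found
```

With the `requests` library, for example, you could pass `response.headers` and `response.cookies.keys()` to this function. An empty result does not prove that a site is unprotected.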

How to Bypass Anti-Bot Systems?

To prevent the system from detecting automation, it's necessary to ensure a sufficient level of masking at each detection level. This can be achieved in several ways:

- By using your own custom-made solutions and maintaining the infrastructure independently;

- By using paid services like Apify, ScrapingBee, Browserless, or Surfsky;

- By combining high-quality proxies, CAPTCHA solvers, and anti-detect browsers;

- By using standard browsers in headless mode with anti-detection patches;

- Or by using many other options of varying complexity.

Network-Level Masking

To protect a bot at the network level, it's essential to use high-quality proxies. Simple tasks might be accomplished using just your own IP address, but this approach is unlikely to be feasible if you intend to collect a significant amount of data. To send tens of thousands of requests regularly, you will need good residential or mobile proxies that haven't been blacklisted.

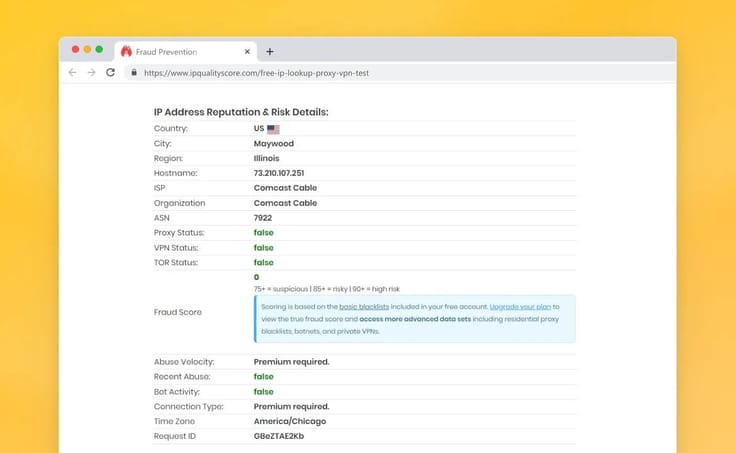

Checking the IP address using IPQualityScore

When choosing a proxy, pay attention to the following parameters:

- Whether its IP address appears in spam databases. This can be checked with tools like PixelScan or by consulting the iplists.firehol.org database.

- Whether there are any DNS leaks. When you test the proxy with a checker such as DNS Leak Test, the DNS servers of your real ISP should not appear in the server list.

- The proxy provider type. Proxies belonging to ISPs are less suspicious.
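
The first check in the list above can be automated. Blocklists such as those published by FireHOL are plain-text files with one IP address or CIDR range per line and `#` comments. The sketch below, using only Python's standard `ipaddress` module, tests whether a proxy's exit IP falls into any listed range. The sample entries are reserved documentation ranges, not real blocklist data, and the function names are illustrative.

```python
import ipaddress


def parse_netset(lines):
    """Parse blocklist lines (IPs or CIDR ranges, '#' starts a comment)."""
    networks = []
    for line in lines:
        entry = line.split("#", 1)[0].strip()
        if entry:
            # strict=False tolerates entries with host bits set.
            networks.append(ipaddress.ip_network(entry, strict=False))
    return networks


def is_listed(ip, networks):
    """Return True if the IP address belongs to any listed network."""
    address = ipaddress.ip_address(ip)
    return any(address in network for network in networks)


# Made-up sample in the same line format (documentation IP ranges).
SAMPLE_LINES = [
    "# example blocklist",
    "192.0.2.0/24",
    "198.51.100.7   # single address",
    "",
]
```

In practice you would download the list once, parse it, and check every proxy's exit IP before adding it to your pool.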

You can learn more about checking the proxy quality here.

Rotating proxies are also useful for web scraping. Instead of a single IP address, they distribute requests among many, which makes it harder for the website to find patterns in the requests and lowers the risk of a block caused by a large number of requests from one IP.
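
Many providers rotate addresses for you behind a single endpoint, but the idea can also be sketched on the client side. The example below cycles through a pool in round-robin order and returns a mapping in the shape the `requests` library accepts for its `proxies` argument. The `ProxyRotator` class and the proxy URLs are hypothetical placeholders.

```python
import itertools


class ProxyRotator:
    """Hand out proxies from a fixed pool in round-robin order."""

    def __init__(self, proxy_urls):
        if not proxy_urls:
            raise ValueError("at least one proxy URL is required")
        self._cycle = itertools.cycle(list(proxy_urls))

    def next_proxies(self):
        """Return the next proxy as a {'http': ..., 'https': ...} mapping."""
        url = next(self._cycle)
        return {"http": url, "https": url}


# Placeholder addresses; substitute the endpoints from your provider.
rotator = ProxyRotator([
    "http://user:pass@proxy1.example.com:8000",
    "http://user:pass@proxy2.example.com:8000",
])
```

Each request would then use a fresh mapping, for instance `requests.get(url, proxies=rotator.next_proxies(), timeout=10)`. Round-robin is the simplest policy; picking proxies at random or retiring ones that start returning CAPTCHAs are natural refinements.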

Fingerprint-Level Masking

Multi-accounting (anti-detect) browsers are perfect for spoofing browser fingerprints. The top-quality ones, like Octo Browser, spoof the fingerprint at the browser kernel level and allow you to create a large number of browser profiles, each looking like a separate user.

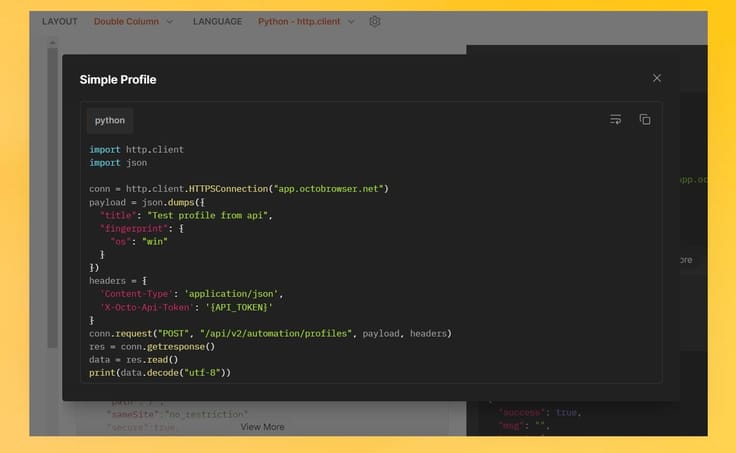

Configuring the digital fingerprint of an Octo Browser profile

Scraping data with an anti-detect browser can be done with the help of any convenient browser automation library or framework. You can create the desired number of profiles with the necessary fingerprint settings, proxies, and cookies, without having to open the browser itself. Later, these can be used either in automation mode or manually.

Working with a multi-accounting browser is not very different from using a regular browser in headless mode. Octo Browser provides detailed documentation with step-by-step instructions on connecting to the API for all popular programming languages.

An example of creating an Octo Browser profile using Python

Professional anti-detect browsers allow you to conveniently manage a large number of browser profiles, connect proxies, and access data that is not normally available with standard scraping methods thanks to an advanced system of digital fingerprint spoofing.

Simulating Real User Actions

To circumvent anti-bot systems, it's also necessary to simulate the actions of real users: delays, cursor movement emulation, key presses with a natural typing rhythm, random pauses, and irregular behavior patterns. You will often need to perform actions like logging in, clicking "Read more" buttons, following links, submitting forms, scrolling through feeds, etc.
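
As one illustration of cursor movement emulation, the sketch below generates a curved path between two points with a quadratic Bézier curve and a randomly placed control point, instead of jumping in a straight line. This is one simple approach among many, and the function name, step count, and the 100-pixel curvature range are arbitrary assumptions.

```python
import random


def cursor_path(start, end, steps=25, rng=None):
    """Return a list of (x, y) points curving from start to end."""
    rng = rng or random.Random()
    (x0, y0), (x1, y1) = start, end
    # A control point pushed away from the midpoint bends the path.
    cx = (x0 + x1) / 2 + rng.uniform(-100, 100)
    cy = (y0 + y1) / 2 + rng.uniform(-100, 100)
    points = []
    for i in range(steps + 1):
        t = i / steps
        # Quadratic Bezier: (1-t)^2 * P0 + 2(1-t)t * C + t^2 * P1
        x = (1 - t) ** 2 * x0 + 2 * (1 - t) * t * cx + t ** 2 * x1
        y = (1 - t) ** 2 * y0 + 2 * (1 - t) * t * cy + t ** 2 * y1
        points.append((round(x), round(y)))
    return points
```

The resulting points can be replayed by an automation tool. With Selenium, for example, the differences between consecutive points could be fed to `ActionChains.move_by_offset`, with short pauses between steps.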

User actions can be simulated using popular open-source solutions for browser automation like Selenium, though other options exist too, such as MechanicalSoup and Nightmare.
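
For instance, typing into a form field one character at a time with uneven pauses looks more natural than sending the whole string at once. The helper below is a minimal sketch that works with any object exposing a `send_keys` method, such as a Selenium `WebElement`. The function name and the pause ranges are assumptions chosen for illustration, not measured human timings.

```python
import random
import time


def type_like_human(element, text, rng=None, sleep=time.sleep):
    """Send text to an element character by character with random pauses.

    element: any object with a send_keys(str) method.
    sleep: injectable so the function can be tested without waiting.
    """
    rng = rng or random.Random()
    for char in text:
        element.send_keys(char)
        pause = rng.uniform(0.08, 0.25)
        if char == " ":
            # Slightly longer hesitation between words.
            pause += rng.uniform(0.1, 0.4)
        sleep(pause)
```

With Selenium, `element` would typically come from `driver.find_element(...)`, for example a search box or a login field.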

To make scraping appear more natural to anti-bot systems, it is advisable to add irregular delays between requests.
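
A minimal sketch of such delays: each pause is a base value scaled by a random factor, with an occasional longer break so that the timing does not form an obvious pattern. The function name and the default numbers are arbitrary and should be tuned to the target site.

```python
import random


def jittered_delay(base=2.0, jitter=0.5, long_pause_chance=0.1, rng=None):
    """Return a randomized delay in seconds.

    The delay falls between base*(1-jitter) and base*(1+jitter);
    with probability long_pause_chance, 5 to 15 extra seconds are added.
    """
    rng = rng or random.Random()
    delay = base * rng.uniform(1 - jitter, 1 + jitter)
    if rng.random() < long_pause_chance:
        delay += rng.uniform(5, 15)
    return delay
```

Calling `time.sleep(jittered_delay())` between requests is usually enough to avoid a perfectly regular request rhythm.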

Conclusions

Anti-bot systems protect websites from automated interactions by analyzing network, browser, and behavioral information about the user. To bypass these systems, each of these levels requires adequate masking.

- At the network level, you can use high-quality proxies, especially rotating ones.

- For spoofing the browser fingerprint, you can use multi-accounting anti-detect browsers like Octo Browser.

- To simulate real user actions, you can use browser automation tools such as Selenium, additionally incorporating irregular delays and behavior patterns.


Happy scraping!