Ethics In Web Scraping

Web scraping is not a new concept as the whole Internet is based on it. For instance, when you share a Youtube video’s link on Facebook, its data gets scraped so that people can see the video’s thumbnail in your post. Thus there are endless ways to use data scraping for everybody’s benefit. But there

Table of Contents

- Web Scraping Code of Conduct

- Web Scraping Ethical Considerations

- Don’t Steal the Data

- Don’t Break the Web

- Ask and Share

- Better be safe than sorry

- Proxies For Ethical Web Scraping Choosing Proxies

- Choosing Proxies

- Conclusion on Web Scraping Ethics

Web scraping is not a new concept as the whole Internet is based on it. For instance, when you share a Youtube video’s link on Facebook, its data gets scraped so that people can see the video’s thumbnail in your post. Thus there are endless ways to use data scraping for everybody’s benefit. But there are some ethical aspects involved in scraping data from the web.

Suppose you apply for a health insurance plan, and you gladly give your personal information to the provider in exchange for the service they provide. But what if some stranger does web scraping magic with your data and uses it for personal purposes. Things can start getting inappropriate, right? Here comes the need for practicing ethical web scraping.

In this article, we will discuss the web scraping code of conduct and the legal and ethical considerations.

Web Scraping Code of Conduct

For practicing legal web scraping, you need to adhere to the following simple rules.

Don’t break the Internet – You need to know that not all websites can withstand thousands of requests per second. Some websites allow that, but others may block you if you send multiple requests using the same IP address. For instance, if you write a scraper that follows hyperlinks, you should test it on a smaller dataset first and ensure it does what it is supposed to do. Further, you need to adjust the settings of your scraper to allow for a delay between requests.

View robots.txt file – The websites use robots.txt files to let bots know whether the site can be crawled or not. When extracting data from the web, you need to critically understand and respect the robots.txt file to avoid legal ramifications.

Share what you can – If you get permission to scrape the data in the public domain and scrape it, you can put it out there (e.g., on datahub.io) for other people to reuse it. If you write a web scraper, you can share its code (e.g., on Github) so that others can benefit from it.

Don’t share downloaded content illegally – It is sometimes OK to scrape the data for personal purposes, even if the information is copyrighted. However, it is illegal to share data of which you don’t hold the right to share.

You can ask nicely – If you need data from a particular organization for your project, you can ask them directly if they could provide you with the data you desire. Else you can also use the organization’s primary information on its website and save yourself from the trouble of creating a web scraper.

Web Scraping Ethical Considerations

You need to keep in mind the below ethics while scraping data from the web.

Don’t Steal the Data

You need to know that web scraping can be illegal in certain circumstances. If the terms and conditions of the website that we want to scrape prohibit users from copying and downloading the content, then we should not scrape that data and respect the terms of that website.

It is OK to scrape the data that is not behind the password-protected authentication system ( publicly available data), keeping in mind that you don’t break the website. However, it can be a potential problem if you share the scraped data further. For instance, if you download content from one website and post it on another website, your scraping will be considered illegal and constitute a copyright violation.

Don’t Break the Web

Whenever you write a web scraper, you query a website repeatedly and potentially access its large number of pages. For each page, a request is sent to the web server that hosts the site. The server processes the request and sends a response back to the computer that runs the code. The requests that we send consume the server’s resources. So, if we send too many requests over a short span of time, we can prevent the other regular users from accessing the site during that time.

The hackers often make Denial of Service (DoS) attacks to shut down the network or machine, making it inaccessible to the intended users. They do this by sending information to the server that triggers a crash or by flooding the target website with traffic.

Most modern web servers include measures for warding off the illegitimate use of their resources, as DoS attacks are common on the Internet. They are vigilant for large numbers of requests coming from a single IP address. They can block that address if it sends multiple requests over a short time interval.

Ask and Share

It is worthwhile to ask the curators or the owners of the data you plan to scrape, depending on the scope of your project. You can ask them if they have data available in a structured format that can fit your project needs. If you want to use their data for research purposes in a manner that could potentially interest them, you can save yourself from the trouble of writing a web scraper.

You can also save others from the trouble of writing a web scraper. For instance, if you publish your data or documentation as part of the research project, someone might want to get your data for use. If you want, you can provide others with a way to download your raw data in a structured format, thus saving t

Better be safe than sorry

Data privacy and copyright legislation differ from country to country. You need to check the laws that apply in your context. For example, in countries like Australia, it is illegal to scrape personal information like phone numbers, email addresses, and names even if they are publicly available.

You should adhere to the web scraping code of conduct to scrape data for your personal use. However, if you want to harvest large amounts of data for commercial or research purposes, you probably have to seek legal advice.

Proxies For Ethical Web Scraping

You know that proxies have a wide variety of applications. Their primary purpose is to hide the IP address and the user’s location. Proxies also allow users to access geo-restricted content when surfing the Internet. Thus, the users can access the hidden pages as proxies bypass the content and geo-restrictions.

You can use proxies to maximize the scraper’s output as they reduce the block rates. Without them, you can scrape minimal data from the web. It is because proxies surpass crawl rates allowing spiders to extract more data. The crawl rate indicates the number of requests you can send in a given timeframe. This rate varies from site to site.

Choosing Proxies

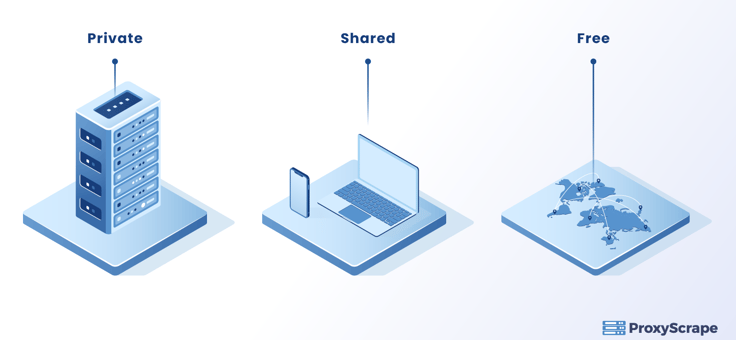

You can choose proxies depending on your project requirements. You can either use a private proxy or a shared proxy.

- Private proxies are the best if your project needs high performance and maximized connection.

- Shared proxies perform well when you do a small-scale project with a limited budget.

- Free proxies are discouraged when extracting data from the web. It is because they are open to the public and are often used for illegal activities.

You can identify the IP sources apart from choosing proxies for your project. There are three categories of proxy servers.

Datacenter Proxies – These are the cheapest and most practical proxies for web scraping. These IPs are created on independent servers and efficiently used to accomplish large-scale scraping projects.

Residential Proxies – They can be hard to obtain as they are affiliated with third parties.

Mobile Proxies – They are the most expensive and are great to use if you have to collect data that is only visible on mobile devices.

Conclusion on Web Scraping Ethics

So far, we discussed that you can extract data from the Internet by keeping in mind the legal and ethical considerations. For instance, you should not steal data from the web. You can not share the data to which you don’t hold the right. If you need an organization’s data for your project, you can nicely ask them if they could share their raw data in a structured format. Else you can write your web scraper to extract data from the website if they allow. Further, we discussed that you can choose different proxies depending on your project needs. You can use the datacenter or residential IPs as they are widely used for web scraping.